The friction between the Department of Defense (DoD) and Anthropic reveals a structural misalignment between the rapid iteration cycles of private frontier AI labs and the rigid requirements of national security procurement. This is not a simple bureaucratic delay; it is a fundamental collision between the stochastic nature of Large Language Models (LLMs) and the deterministic requirements of military systems. When a private entity like Anthropic, backed by billions in venture and corporate capital, enters the defense orbit, the primary point of failure is rarely the technology itself. Instead, the bottleneck is the "Control-Innovation Paradox": the more control the Pentagon demands over model weights and alignment logic, the less the deployed model can benefit from the high-frequency updates that define its competitive edge.

The Three Pillars of the Structural Standoff

To understand why this dispute persists, we must categorize the disagreement into three distinct layers of operational friction.

1. The Weight-Sharing Conflict (Intellectual Property vs. Oversight)

Anthropic’s business model relies on the secrecy of its proprietary training methodologies and model weights. For the Pentagon, however, "black box" systems are a liability. National security protocols often require White-Box Access, where the government can audit the underlying architecture to ensure no backdoors or "sleeper agents" (latent behaviors) exist within the weights.

2. The Compute Sovereignty Problem

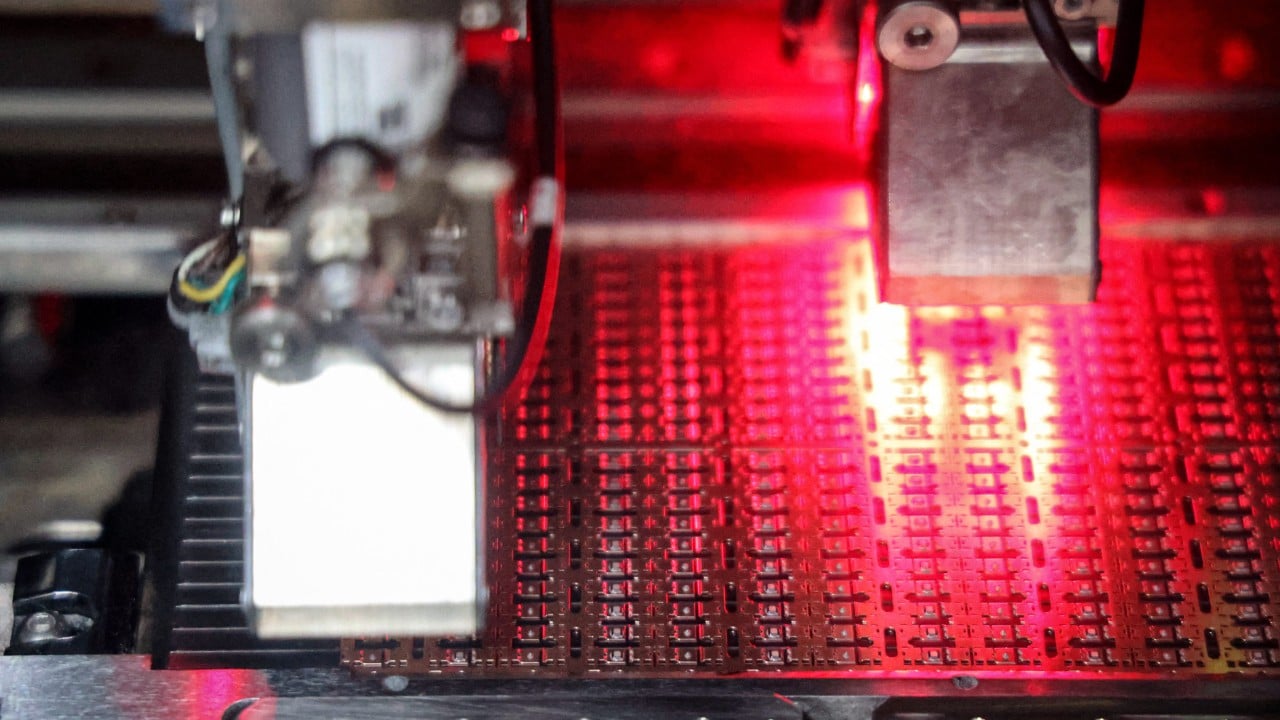

Anthropic runs on massive, centralized cloud clusters (primarily via AWS and Google Cloud). Military operations, by contrast, require Edge Deployment or Air-Gapped Sovereign Clouds. Porting a frontier model like Claude 3.5 into a restricted environment while maintaining its performance introduces massive technical debt. The "Inference Decay" that occurs when a model is severed from its native, hyper-optimized infrastructure makes it less useful for the very mission-critical tasks the Pentagon envisions.

3. Alignment Divergence

Anthropic’s "Constitutional AI" is designed to minimize harm based on a specific set of human-centric, safety-first principles. Military applications, conversely, may require models to calculate kinetic outcomes, analyze lethality, or assist in offensive cyber operations. The "Safety Layer" that Anthropic has built to prevent misuse is, in a tactical context, a functional restraint that the DoD views as an impediment to mission success.

The Cost Function of Model Sovereignty

The Pentagon's pursuit of a customized, controlled version of Anthropic’s technology carries a hidden tax. We can quantify this through the Model Obsolescence Rate. In the current AI arms race, a model’s peak utility decays by approximately 20-30% every six months as newer architectures emerge.

The traditional "Program of Record" (PoR) in defense procurement takes three to seven years to move from concept to deployment. If the Pentagon forces Anthropic into a standard procurement cycle, the model will be functionally obsolete before it reaches the end-user. This creates a Capability Gap where adversarial nations, utilizing unaligned or less-regulated open-source models, can iterate at 10x the speed of the U.S. bureaucratic apparatus.
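The obsolescence argument above is compound-decay arithmetic, and a short sketch makes the scale visible. It assumes the 20-30% figure compounds every six months (the text does not say whether the decay compounds, so that is an assumption), and the name `remaining_utility` is purely illustrative.

```python
def remaining_utility(decay_per_half_year: float, years: float) -> float:
    """Fraction of peak utility left after `years`.

    Assumption: the decay compounds once per six-month period.
    """
    if not 0.0 <= decay_per_half_year <= 1.0:
        raise ValueError("decay_per_half_year must be between 0 and 1")
    periods = years * 2  # two six-month periods per year
    return (1.0 - decay_per_half_year) ** periods


if __name__ == "__main__":
    # Decay range and procurement cycle lengths taken from the text.
    for decay in (0.20, 0.30):
        for years in (3, 7):
            left = remaining_utility(decay, years)
            print(f"decay {decay:.0%} per 6 months, {years}-year cycle: "
                  f"{left:.1%} of peak utility remains")
```

Under these assumptions, about 26% of peak utility remains after a three-year cycle at the 20% rate, and less than 1% remains after a seven-year cycle at the 30% rate.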

This friction is exacerbated by the Incentive Asymmetry:

- Anthropic's Incentive: Maintain a single, global "gold standard" model to minimize maintenance costs and maximize safety research.

- The Pentagon's Incentive: Create a "forked" version of the model that is physically isolated, uniquely tuned for defense datasets, and entirely under government jurisdiction.

Tactical Vulnerabilities in the Current Framework

The current standoff highlights a failure to define the Minimum Viable Trust (MVT) required for AI deployment in high-stakes environments. Without a standardized framework for "Red Teaming" that satisfies both the lab’s IP concerns and the government’s security concerns, we see a recursive loop of testing that yields no deployment.

The "Red Teaming" process currently lacks a quantitative baseline. Is a model "safe" if it refuses 99% of harmful queries, or is the 1% failure rate an unacceptable risk in a nuclear or biological context? The Pentagon’s inability to define a Threshold of Acceptable Stochasticity means that every model update becomes a new negotiation, stalling the integration of AI into the "Kill Web"—the interconnected sensor-to-shooter network the military is building.
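One way to make the 99% question concrete is to ask how likely at least one failure is across a whole batch of harmful queries. The sketch below assumes each query is refused independently with the same probability, which is a simplification; `max_risk` is a hypothetical policy parameter and not a threshold the Pentagon has defined.

```python
def prob_at_least_one_failure(refusal_rate: float, harmful_queries: int) -> float:
    """Probability that at least one harmful query is NOT refused.

    Assumption: queries are independent and share the same refusal rate.
    """
    if not 0.0 <= refusal_rate <= 1.0:
        raise ValueError("refusal_rate must be between 0 and 1")
    if harmful_queries < 0:
        raise ValueError("harmful_queries must be non-negative")
    return 1.0 - refusal_rate ** harmful_queries


def meets_threshold(refusal_rate: float, harmful_queries: int, max_risk: float) -> bool:
    """True if the batch-level failure probability stays within max_risk."""
    return prob_at_least_one_failure(refusal_rate, harmful_queries) <= max_risk


if __name__ == "__main__":
    for n in (1, 100, 1000):
        p = prob_at_least_one_failure(0.99, n)
        print(f"{n} harmful queries at 99% refusal: P(at least one failure) = {p:.3f}")
```

With a 99% refusal rate, the chance of at least one failure across 100 independent harmful queries is roughly 63%, which is why a per-query rate alone does not settle whether a model is acceptable for a given context.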

The Logic of the Third Way: Middle-Tier Integration

The impasse suggests that the binary choice between "Standard Commercial Claude" and "Government-Owned Claude" is a false dichotomy. A more logical framework involves Federated Fine-Tuning.

Under this arrangement, Anthropic retains the base model weights, while the Pentagon hosts a "Security Adapter" layer. This allows the government to tune the model on classified data without the data ever leaving the secure environment and without the base weights being exposed to the government. This Decoupled Architecture solves the IP dispute but introduces a new technical challenge: Latency Jitter. If the safety checks and the tactical adapters are processed in different environments, every request must cross an environment boundary, so the time-to-answer becomes both longer and less predictable, which is unacceptable in electronic warfare or missile defense scenarios.
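The latency concern can be sketched as a simple budget over stages that run in sequence. Every stage name and millisecond figure below is an illustrative placeholder, not a measurement, and the `Stage` and `end_to_end` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    min_ms: float  # best-case latency for this stage
    max_ms: float  # worst-case latency for this stage


def end_to_end(stages):
    """Return (best_case_ms, worst_case_ms, jitter_ms) for sequential stages."""
    best = sum(s.min_ms for s in stages)
    worst = sum(s.max_ms for s in stages)
    return best, worst, worst - best


def within_deadline(stages, deadline_ms: float) -> bool:
    """A hard real-time budget has to hold in the worst case, not on average."""
    return end_to_end(stages)[1] <= deadline_ms


# Placeholder numbers chosen only to illustrate the shape of the problem.
PIPELINE = [
    Stage("base model inference (vendor side)", 80.0, 120.0),
    Stage("cross-environment hop", 5.0, 60.0),
    Stage("security adapter (government side)", 10.0, 20.0),
    Stage("safety check", 15.0, 40.0),
]

if __name__ == "__main__":
    best, worst, jitter = end_to_end(PIPELINE)
    print(f"best {best} ms, worst {worst} ms, jitter {jitter} ms")
    print("meets 200 ms deadline:", within_deadline(PIPELINE, 200.0))
```

In this toy budget the cross-environment hop contributes the widest range, which mirrors the point in the text: splitting processing across environments hurts predictability as well as average speed.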

The Geopolitical Risk of Perfectionism

While the U.S. debates the ethics and control of Anthropic’s models, the global landscape is shifting toward Unconstrained AI. Adversaries are not bound by "Constitutional AI" or the need for commercial profitability. This creates a "Security Dilemma" where the U.S. quest for a perfectly controlled and safe AI leads to a relative loss in strategic power.

The mechanism at play here is Strategic Atrophy. By denying soldiers access to frontier models due to unresolved control disputes, the DoD prevents the development of "AI Literacy" within the ranks. Technology is not just about the code; it is about the operational doctrine that grows around it. Every month of delay in the Anthropic-Pentagon relationship is a month of lost doctrinal evolution.

Defining the Path Forward: The Operational Playbook

To resolve the friction, the strategy must shift from a "Control First" to a "Validation First" mindset. This requires three immediate technical and policy shifts:

1. Hardware-Level Verification

Instead of demanding the model weights, the Pentagon should invest in Trusted Execution Environments (TEEs) at the chip level. If the model runs within a secure enclave on NVIDIA or Groq hardware that the government trusts, the need to audit the model itself is diminished. This protects Anthropic’s IP while giving the DoD hardware-backed assurance that data is not being exfiltrated.
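A very reduced sketch of the idea follows: the verifier compares a cryptographic measurement of what is loaded against a value it already trusts, without needing to read the artifact's contents in the clear. Real TEE attestation relies on hardware-signed reports checked against a vendor certificate chain, which this sketch does not model; it shows only the measurement-comparison step, and the function names are hypothetical.

```python
import hashlib
import hmac


def measure(artifact: bytes) -> str:
    """SHA-256 digest standing in for an enclave's launch measurement."""
    return hashlib.sha256(artifact).hexdigest()


def matches_expected(artifact: bytes, expected_hex: str) -> bool:
    """Constant-time comparison of the measurement with the trusted value."""
    return hmac.compare_digest(measure(artifact), expected_hex)


if __name__ == "__main__":
    approved_build = b"placeholder bytes for an approved model build"
    trusted_value = measure(approved_build)  # recorded when the build is approved

    print(matches_expected(approved_build, trusted_value))
    print(matches_expected(b"tampered build", trusted_value))
```

The design point is that the government side only ever handles the digest, so the check can run without the vendor disclosing the artifact itself.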

2. Dynamic Certification

The "Authority to Operate" (ATO) must evolve from a static document into a continuous process. Much as "DevSecOps" revolutionized software deployment, a "ModelOps" framework would allow Anthropic to push updates to a government-run shadow environment for automated testing against a battery of classified benchmarks. If the model passes the full battery, the ATO is automatically renewed.
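The renewal gate described above can be sketched as a threshold check over benchmark scores. The benchmark names, score scale, and thresholds here are hypothetical, and the choice to treat a missing score as a failure (fail closed) is an assumption rather than anything specified in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    renewed: bool
    failures: tuple  # names of benchmarks that were missing or below threshold


def evaluate_ato(scores: dict, thresholds: dict) -> GateResult:
    """Renew only if every required benchmark meets its minimum score."""
    failures = []
    for name, minimum in thresholds.items():
        score = scores.get(name)
        # Fail closed: a benchmark that did not report a score counts as failed.
        if score is None or score < minimum:
            failures.append(name)
    return GateResult(renewed=not failures, failures=tuple(sorted(failures)))


if __name__ == "__main__":
    thresholds = {"refusal_accuracy": 0.99, "task_quality": 0.90}
    candidate = {"refusal_accuracy": 0.995, "task_quality": 0.87}
    print(evaluate_ato(candidate, thresholds))
```

Returning the list of failed benchmarks, rather than a bare boolean, gives both parties a concrete record of why a given update did not renew.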

3. The "Tiered Use-Case" Strategy

The dispute is often treated as a monolith, but the requirements for an LLM summarizing logistics reports are vastly different from those for one assisting in targeting. The Pentagon should split its demands into three tiers:

- Tier 1 (Non-Kinetic): Use standard commercial APIs with basic data encryption.

- Tier 2 (Intelligence): Use "Sovereign Cloud" instances with limited weight-sharing.

- Tier 3 (Kinetic/Tactical): Move toward government-funded, custom-built "Small Language Models" (SLMs) that are trained from scratch on military data, bypassing the Anthropic alignment conflict entirely.
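The tiering above amounts to a routing rule from use case to deployment mode. The sketch below encodes the three tiers from the list; the two boolean inputs and the rule that targeting outranks classification are assumptions made for illustration, not criteria stated in the text.

```python
from enum import Enum


class Tier(Enum):
    NON_KINETIC = 1
    INTELLIGENCE = 2
    KINETIC = 3


# Deployment modes paraphrased from the three tiers listed above.
DEPLOYMENT = {
    Tier.NON_KINETIC: "standard commercial API with basic data encryption",
    Tier.INTELLIGENCE: "sovereign cloud instance with limited weight-sharing",
    Tier.KINETIC: "government-funded small language model trained on military data",
}


def classify(handles_classified: bool, supports_targeting: bool) -> Tier:
    """Hypothetical rule: the most sensitive attribute determines the tier."""
    if supports_targeting:
        return Tier.KINETIC
    if handles_classified:
        return Tier.INTELLIGENCE
    return Tier.NON_KINETIC


if __name__ == "__main__":
    tier = classify(handles_classified=False, supports_targeting=False)
    print(tier.name, "->", DEPLOYMENT[tier])
```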

The strategic play is to stop trying to force a "General Purpose" model into a "Specific Purpose" cage. The Pentagon must accept that it will never have 100% control over a frontier model like Claude without destroying the very agility that makes the model valuable. The solution lies in building the infrastructure to wrap these models in a "Safety Sandbox" that is owned and operated by the military, allowing the underlying intelligence to be upgraded at the speed of the commercial market while the constraints remain under sovereign command.