The British government is shifting its counter-disinformation strategy from the shadows of Whitehall directly into the YouTube algorithm. This isn't just about fact-checking or debunking viral myths after they have already taken root. Instead, the Cabinet Office is bankrolling a sophisticated campaign to reach "at-risk" demographics before they ever click their way down a conspiracy-laden rabbit hole. By recruiting influencers and deploying targeted ad buys, the state is attempting to win a war for reality on a platform that has historically been the breeding ground for its fiercest critics.

The core of this initiative involves "pre-bunking," a psychological tactic designed to inoculate the public against common manipulation techniques. Rather than chasing every lie about tax hikes or immigration numbers, the government wants to teach citizens how to spot the emotional triggers and logical fallacies that disinformation peddlers use. It is a radical departure from the traditional "official statement" model, and it tacitly acknowledges that public trust in institutional voices is at an all-time low. If people won’t listen to a minister in a suit, perhaps they will listen to a creator they already follow.

The Failure of Traditional Fact Checking

For years, the response to online falsehoods was reactive. A lie would travel halfway around the world while the Civil Service was still drafting a press release. By the time a formal correction was issued, the original narrative had already calcified in the minds of millions. This "whack-a-mole" strategy failed because it ignored the fundamental way people consume information online.

Algorithms prioritize engagement over accuracy. Anger, fear, and outrage are the highest-performing currencies on social media. When the government tries to counter these emotions with dry data, it is bringing a knife to a gunfight. The new YouTube drive recognizes that the battle isn't about facts; it's about narrative dominance and the speed of delivery.

The Rise of the Pre-Bunking Model

Pre-bunking operates on the principle of inoculation. In medical terms, you expose a person to a weakened version of a virus to build their immunity. In the information space, the government shows users the "anatomy of a lie." This might involve short videos that explain "fear-mongering" or "false dichotomies" using neutral, non-political examples.

The goal is to create a mental filter. When a user later encounters a genuine piece of disinformation, their brain recognizes the pattern of manipulation rather than just the content of the claim. It is a proactive defense mechanism. However, the move from neutral educational content to state-sponsored messaging is a narrow path to walk. Critics argue that the government itself is a biased actor, and having the state decide what constitutes a "manipulation technique" is inherently problematic.

Influencers as the New Civil Servants

The most controversial element of this campaign is the use of third-party creators. The Cabinet Office has realized that the messenger matters as much as the message. To reach young men, skeptical hobbyists, or isolated communities, the government is bypassing traditional media and partnering with YouTubers who already have established rapport with these audiences.

This creates a strange new dynamic in the creator economy. YouTubers, once seen as the ultimate outsiders, are being drafted as unofficial spokespeople for the state. This isn't always transparent. While advertising rules require paid partnerships to be clearly disclosed, the editorial influence behind the scenes can be subtle. The government provides the briefing materials and the psychological framework; the creator provides the "authentic" voice.

The Trust Gap

There is a glaring irony at the heart of this strategy. The very people the government is trying to reach are those who typically distrust the government. When a skeptical viewer finds out their favorite creator is being paid by the Cabinet Office to "educate" them on how to think, the result can be a massive backlash. It can actually validate the "keyboard warriors" the government is trying to silence.

The Mechanics of Social Engineering

This isn't just a marketing campaign; it is a massive exercise in behavioral science. The government is utilizing the same tools that advertisers use to sell soap or sneakers to sell "truth." They are targeting specific postcodes, age groups, and search histories. If you have been watching videos about alternative medicine or sovereign citizenship, you are more likely to see a government-backed "pre-bunking" ad.

This level of granular targeting by the state should give any civil liberties advocate pause. We are seeing the infrastructure of the surveillance state being repurposed for psychological intervention. While the intent might be to protect the democratic process from foreign interference or domestic radicalization, the tools themselves are agnostic. They can be used to promote a healthy skepticism of lies, or they can be used to marginalize legitimate dissent.

The Algorithm Problem

YouTube’s recommendation engine is a black box. The government is essentially trying to "hack" this system to ensure its content is seen. This involves significant spending on "TrueView" ads: the skippable spots that play before a video and the promoted videos that surface in search results and your sidebar. By injecting its narrative into the stream of content, the government is attempting to break the "filter bubbles" that keep people trapped in echo chambers.

The problem is that the algorithm is designed to keep you on the platform, not to keep you informed. If a pre-bunking video makes a user feel lectured or patronized, they will click away. This forces the government to make its content more "entertaining," which often leads to a thinning of the actual substance. We are left with high-production-value "infotainment" that mimics the aesthetics of the very conspiracists it seeks to debunk.

Foreign Influence vs. Domestic Dissent

The official justification for this YouTube push is often the threat of foreign "bot farms" and state-backed disinformation from actors like Russia or China. This is a real and documented threat. Foreign intelligence services use YouTube to sow discord and weaken the social fabric of the UK.

However, the government’s definition of "disinformation" has a habit of expanding to include domestic political opposition. During the pandemic, the "Counter Disinformation Unit" was criticized for monitoring the social media posts of journalists and academics who questioned lockdown policies. When the state takes on the role of the ultimate arbiter of truth, the line between protecting the public and policing thought becomes dangerously thin.

The Keyboard Warrior Myth

The government’s rhetoric often paints a picture of the "keyboard warrior" as a lonely, radicalized individual in a basement. This is a convenient caricature. In reality, the people skeptical of government narratives are often engaged citizens who feel abandoned by traditional institutions. They aren't necessarily "misinformed"; they are "unpersuaded."

A drive to fight "keyboard warriors" ignores the underlying reasons why people lose faith in the state. If the government’s actions don’t match its words, no amount of pre-bunking or influencer partnerships will fix the trust deficit. You cannot "correct" a lack of credibility with a better YouTube strategy.

The Cost of the Information War

Millions of pounds of taxpayer money are flowing into this digital infrastructure. This money goes to creative agencies, data analysts, and the tech platforms themselves. Essentially, the British taxpayer is paying YouTube to show them government-approved messages on how to consume information.

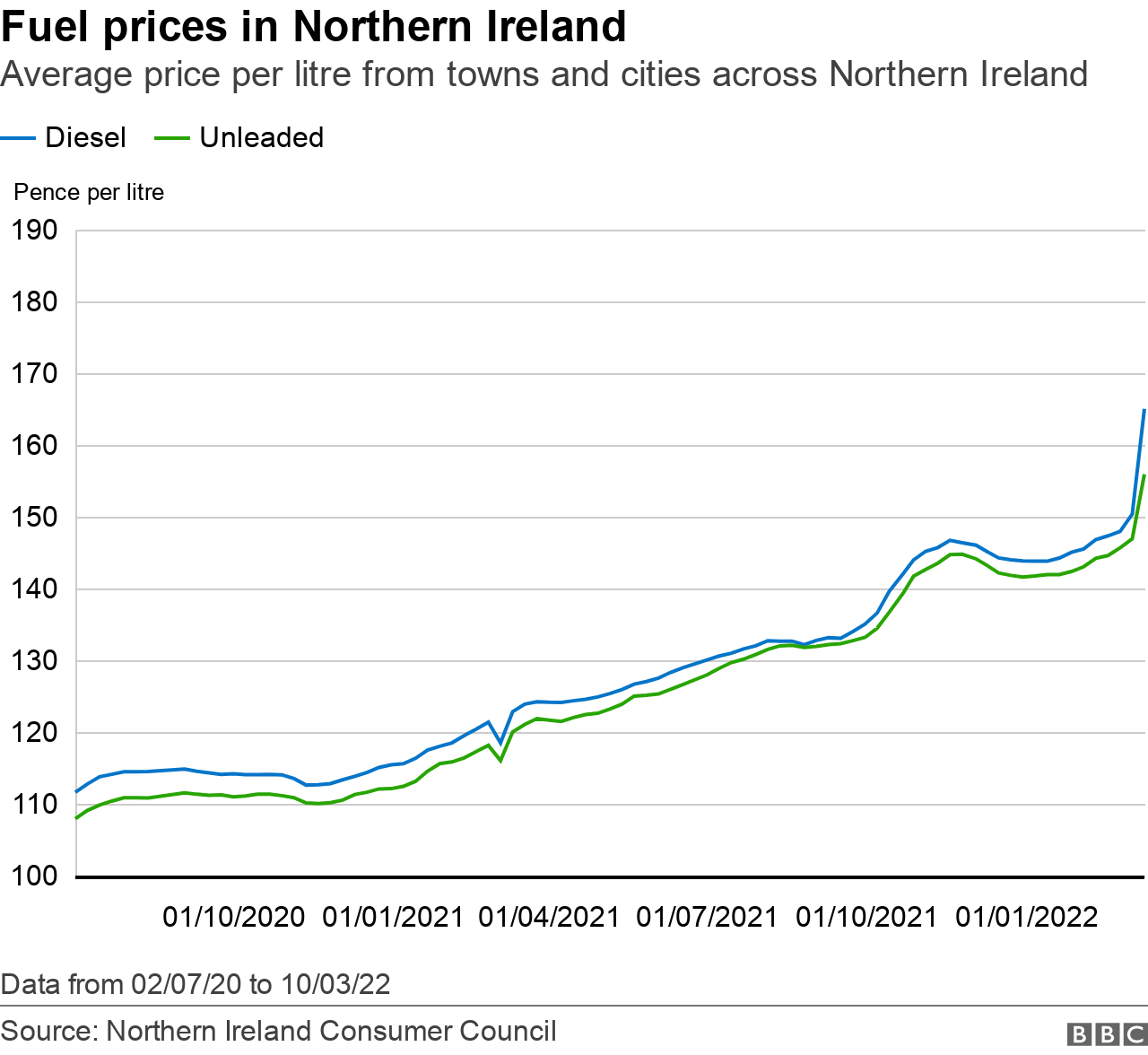

We must also consider the "opportunity cost" of this focus on narrative. While the government spends its energy on how things are perceived, the actual issues—the cost of living, the state of the NHS, the housing crisis—remain the primary drivers of public frustration. Disinformation thrives in the gaps between government promises and the reality of people’s lives.

The New Digital Literacy

There is a version of this program that is genuinely beneficial. Digital literacy (knowing how to verify sources, how to check for doctored images, and how to find out who funds a think tank) is a vital skill for the 21st century. When these programs are run through independent educational bodies rather than the Cabinet Office, they carry much more weight.

The danger arises when "literacy" becomes a shorthand for "agreeing with the official line." True critical thinking involves questioning the government just as much as it involves questioning a random post on X. If the YouTube drive doesn't encourage that level of broad skepticism, it isn't education; it's just another form of messaging.

The Technological Arms Race

As the government ramps up its YouTube presence, the creators of disinformation are also evolving. They are moving to lightly moderated platforms like Telegram or using AI-generated deepfakes that are increasingly difficult to detect. The "pre-bunking" videos of today will be obsolete by tomorrow as the techniques of manipulation become more sophisticated.

The government is playing a perpetual game of catch-up. Every time they identify a new trope, the "keyboard warriors" pivot. This leads to a cycle of increased spending and more intrusive monitoring as the state tries to keep its grip on the public discourse.

The Role of the Platforms

YouTube itself sits in a comfortable position. It receives the government’s ad revenue while simultaneously profiting from the high-engagement "controversial" content that the government is trying to fight. The platforms have very little incentive to actually solve the problem of disinformation because the conflict generates views.

Instead of pressuring platforms to change their underlying business models—which prioritize addiction and polarization—the government is simply becoming another player in the attention economy. They are competing for eyeballs alongside the very conspiracists they claim to despise.

Accountability and Oversight

Who watches the watchers? There is currently very little transparent oversight of how the Cabinet Office selects its "targets" for these campaigns or how it measures success. If a campaign fails to change minds, does the government double down on the spending? If a campaign "works," does that mean people are more informed, or just more compliant?

The records of these digital interventions should be open to public scrutiny. We need to know which influencers were approached, what they were told to say, and how much they were paid. Without this transparency, the government’s drive to fight "shadowy" disinformation is itself a shadowy operation.

The Pivot to Narrative Control

The shift toward YouTube represents a quiet admission that the traditional ways of governing are no longer sufficient to maintain order in a hyper-connected society. The state can no longer rely on the "Bully Pulpit" of the evening news. It must now enter the messy, chaotic world of the comments section.

This isn't just a change in medium; it's a change in the nature of power. Power used to be about the ability to pass laws and enforce them. Now, power is increasingly about the ability to manage perception and dictate the "vibes" of the national conversation. The YouTube drive is the most visible manifestation of this new, psychological form of governance.

The battle for the British mind isn't happening in Parliament. It’s happening in the "Skip Ad" button and the "Up Next" sidebar. By the time you realize you're being "pre-bunked," the narrative has already been set. The government has stopped trying to win the argument and has started trying to prevent the argument from happening in the first place.

Stop looking for the truth in the official press releases and start looking for it in the metadata of your recommendations. The most effective way to resist manipulation isn't to watch a government video on how to think; it's to understand why the government is so desperate for you to watch it.